Virtual Clusters

This is a detailed guide for Virtual Clusters (Cloud Native Deployments). For an overview of all runtime types and how to choose between them, see Runtimes Overview.

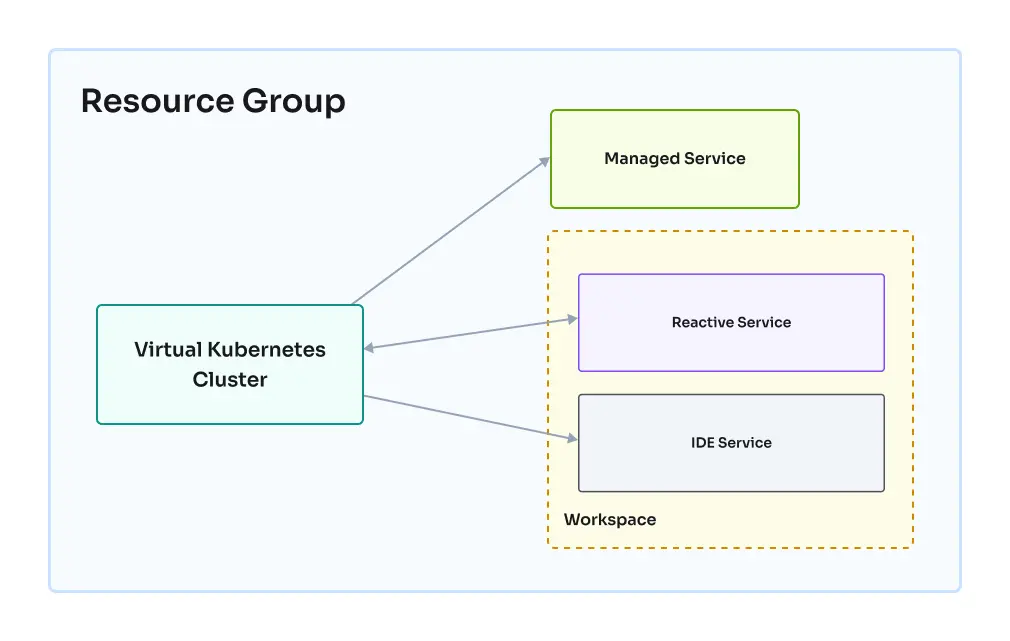

Virtual Clusters let you run Kubernetes workloads within the Codesphere infrastructure. Each Virtual Cluster provides a dedicated Kubernetes environment for your resource group, supporting standard Kubernetes workloads and tools.

Each Virtual Cluster is isolated, with its own control plane and resource limits. You can deploy, update, and remove workloads independently and securely from other resource groups.

Virtual Clusters are implemented as managed services within Codesphere. Codesphere manages the underlying infrastructure, while each Virtual Cluster gives you access to the Kubernetes APIs and supports your native workloads and dependencies.

This feature is intended for advanced users with experience in Kubernetes. If you do not need direct access to Kubernetes primitives, consider using another Codesphere runtime type for simpler workflows.

Compatibility

Virtual Clusters are designed to give you maximum flexibility for deploying custom workloads. You can bring your own Kubernetes dependencies and use familiar tools, such as Helm charts, to deploy applications and services directly into the Codesphere ecosystem with minimal effort.

For example, you can deploy Apache Superset to the Virtual Cluster in just a few steps from the Codesphere IDE or your landscape:

helm repo add superset https://apache.github.io/superset

helm repo update

kubectl create namespace superset

helm install superset superset/superset --namespace superset
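After the install, it can help to confirm that the release exists and that its pods are starting. The following is a minimal sketch and not part of the original steps: the function name `check_release` is hypothetical, while `helm status` and `kubectl get pods` are standard commands.

```shell
# check_release RELEASE NAMESPACE
# Hypothetical helper: prints the Helm release status, then the pods
# in the namespace so you can see whether they reach the Running state.
check_release() {
  release="$1"
  namespace="$2"
  # Stop early if Helm does not know the release.
  helm status "$release" --namespace "$namespace" || return 1
  kubectl get pods --namespace "$namespace"
}

# Usage with the values from the Superset example:
#   check_release superset superset
```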

Connectivity

Virtual Clusters are deeply integrated with the Codesphere platform, making it easy to connect your Kubernetes workloads with other Codesphere resources. As part of the Codesphere host cluster, your Virtual Clusters can communicate seamlessly with landscapes, managed services, and other resources within the same team. This integration simplifies complex architectures and enables powerful workflows across your cloud infrastructure.

Reaching Cluster Resources

Kubernetes pods and services running inside your Virtual Cluster are accessible from any workspace within the same resource group. This means you can interact with your applications and services directly from your Codesphere workspaces, making development and troubleshooting more efficient.

To access a service running in your Virtual Cluster, you'll need to know:

- The resource name (e.g. superset)

- The namespace name (e.g. default)

- The Codesphere resource group ID (e.g. 180312)

With this information, you can reach your service using a URL in the following format:

http://[RESOURCE_NAME]-x-[VIRTUAL_NAMESPACE]-x-k8s.rg-[RESOURCE_GROUP_NAME]
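As an illustration, the format above can be filled in with a small shell helper. This is a sketch under one assumption: the placeholder after `rg-` takes the resource group ID from the list above. The function name `vcluster_service_url` is hypothetical.

```shell
# vcluster_service_url RESOURCE_NAME NAMESPACE RESOURCE_GROUP
# Hypothetical helper that fills in the URL format shown above.
# Assumption: the value after "rg-" is the resource group ID.
vcluster_service_url() {
  printf 'http://%s-x-%s-x-k8s.rg-%s\n' "$1" "$2" "$3"
}

# Example with the sample values from the list:
vcluster_service_url superset default 180312
# prints http://superset-x-default-x-k8s.rg-180312
```

From a workspace terminal, the result could then be passed to a client such as `curl` to check that the service responds.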

Access from Virtual Cluster

Your Virtual Cluster can also reach out to other Codesphere resources, such as landscapes and managed services (for example, managed Postgres) within the same resource group. This makes it easy to build complex, multi-service applications that span both Kubernetes and Codesphere's solutions.

To access a workspace reactive from your Virtual Cluster, you'll need:

- The landscape reactive service name

- The ID of the workspace where the reactive is deployed

You can then use the following URL format:

http://ws-server-[WORKSPACE_ID]-[REACTIVE_NAME].workspaces
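The same format can be assembled in a script that runs inside the cluster, for example in a container entrypoint. This is only a sketch: the function name `reactive_url`, the workspace ID `12345`, and the reactive name `backend` are hypothetical values chosen for illustration.

```shell
# reactive_url WORKSPACE_ID REACTIVE_NAME
# Hypothetical helper that fills in the workspace URL format shown above.
reactive_url() {
  workspace_id="$1"
  reactive_name="$2"
  printf 'http://ws-server-%s-%s.workspaces\n' "$workspace_id" "$reactive_name"
}

# Example with made-up values:
reactive_url 12345 backend
# prints http://ws-server-12345-backend.workspaces
```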

Potential Use Cases

- Deploy Superset as a Helm chart

- Deploy Grafana and connect it to a managed Postgres service running in Codesphere

- Deploy Prometheus and connect it to your applications running in Codesphere to monitor custom metrics

- Add custom resource definitions and controllers (e.g. jsPolicy)

Cluster Access

The primary way to interact with your Virtual Cluster is through the kubeconfig file that is injected directly into the workspace filesystem. This configuration file allows you to use kubectl and helm commands from your workspace terminal and in the execution steps of your landscape.

The configuration file is automatically injected as ~/.kube/config into the workspace filesystem. kubectl and helm will use it as the default configuration file to connect to the Virtual Cluster.

You can automate and version releases in the prepare or run stage of a landscape by executing:

helm upgrade --install my-release my-charts/my-app --version 2.1.0
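If you want the stage to fail visibly when a rollout does not complete, the command above can be wrapped in a small function. This is a sketch, not the documented Codesphere setup: the function name `deploy_release` and the fallback to the `default` namespace are assumptions, while `--namespace`, `--create-namespace`, and `--wait` are standard Helm flags.

```shell
# deploy_release RELEASE CHART VERSION [NAMESPACE]
# Hypothetical wrapper around "helm upgrade --install".
deploy_release() {
  release="$1"
  chart="$2"
  version="$3"
  # Assumption: fall back to the "default" namespace when none is given.
  namespace="${4:-default}"
  # --install creates the release on the first run and upgrades it afterwards.
  # --wait blocks until the resources are ready, so a failed rollout
  # makes the command (and the stage) exit with an error.
  helm upgrade --install "$release" "$chart" \
    --version "$version" \
    --namespace "$namespace" \
    --create-namespace \
    --wait
}

# Usage with the values from the command above:
#   deploy_release my-release my-charts/my-app 2.1.0
```

Pinning `--version` keeps the deployed chart tied to what is committed alongside your landscape configuration, so a rerun of the stage installs the same chart version.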

Triggering a config update

The kubeconfig file is (re)injected into your workspace when any of the following events occur:

- The workspace environment variable list is changed

- The workspace is started

- Workspace resources are updated (e.g. plan is changed, landscape is synced)

We are working on improving the synchronization of the kubeconfig file for landscapes and long-running workspaces. For now, if you encounter issues with an outdated or missing kubeconfig, try changing an environment variable or (re)starting your workspace to trigger a fresh injection of the configuration file.
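A script can guard against a missing kubeconfig before it calls kubectl or helm. The sketch below only checks that the file exists and is not empty; it cannot tell whether the content is outdated, which only shows up when a request to the cluster fails. The function name `kubeconfig_ready` is hypothetical.

```shell
# kubeconfig_ready [PATH]
# Hypothetical helper: succeeds when the kubeconfig file exists and is
# not empty. Defaults to the injected location ~/.kube/config.
kubeconfig_ready() {
  config="${1:-$HOME/.kube/config}"
  [ -s "$config" ]
}

# Usage:
#   if kubeconfig_ready; then
#     kubectl get namespaces
#   else
#     echo "kubeconfig missing, restart the workspace to re-inject it" >&2
#   fi
```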

Dependencies

The kubectl and helm binaries are pre-installed in the default Codesphere workspace image. If you are using a custom image, make sure both are present if you want to interact with the Virtual Cluster.
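For custom images, a quick check at the start of a script can report which tools are missing. This is a minimal sketch; the function name `missing_tools` is hypothetical and relies only on the standard `command -v` lookup.

```shell
# missing_tools TOOL...
# Hypothetical helper: prints each named tool that is not found on PATH,
# one per line. Prints nothing when all tools are available.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

# Usage:
#   missing="$(missing_tools kubectl helm)"
#   [ -z "$missing" ] || { echo "missing tools: $missing" >&2; exit 1; }
```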

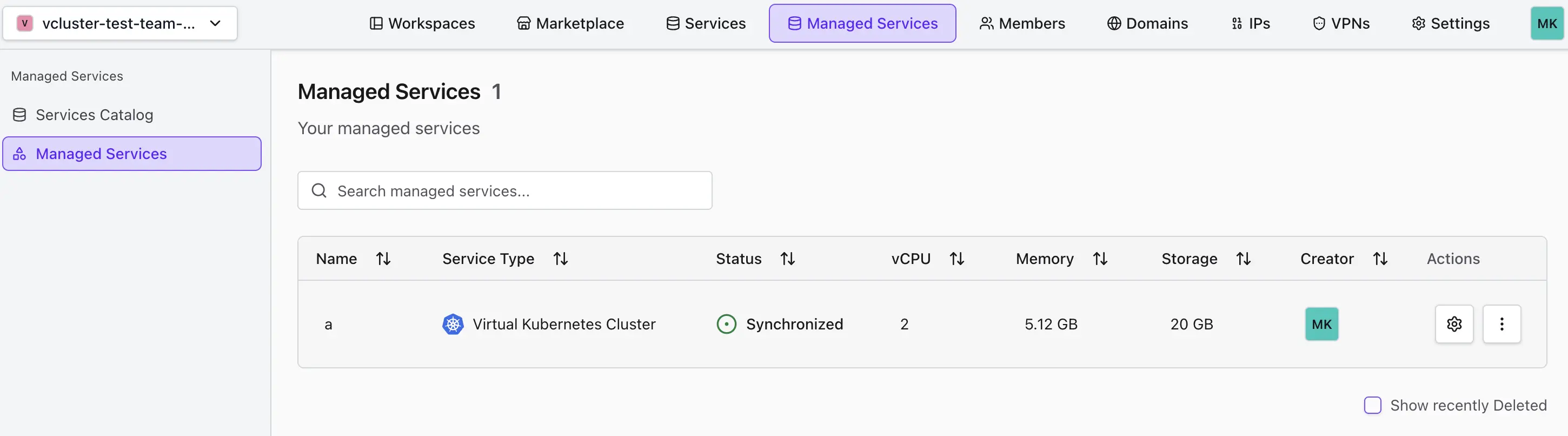

Managing Clusters

Virtual Clusters are available as a Managed Service in Codesphere. The cluster lifecycle is managed through the Managed Services interface, where you can create, update, and delete your clusters as needed.

See the Managed Services documentation for more details.

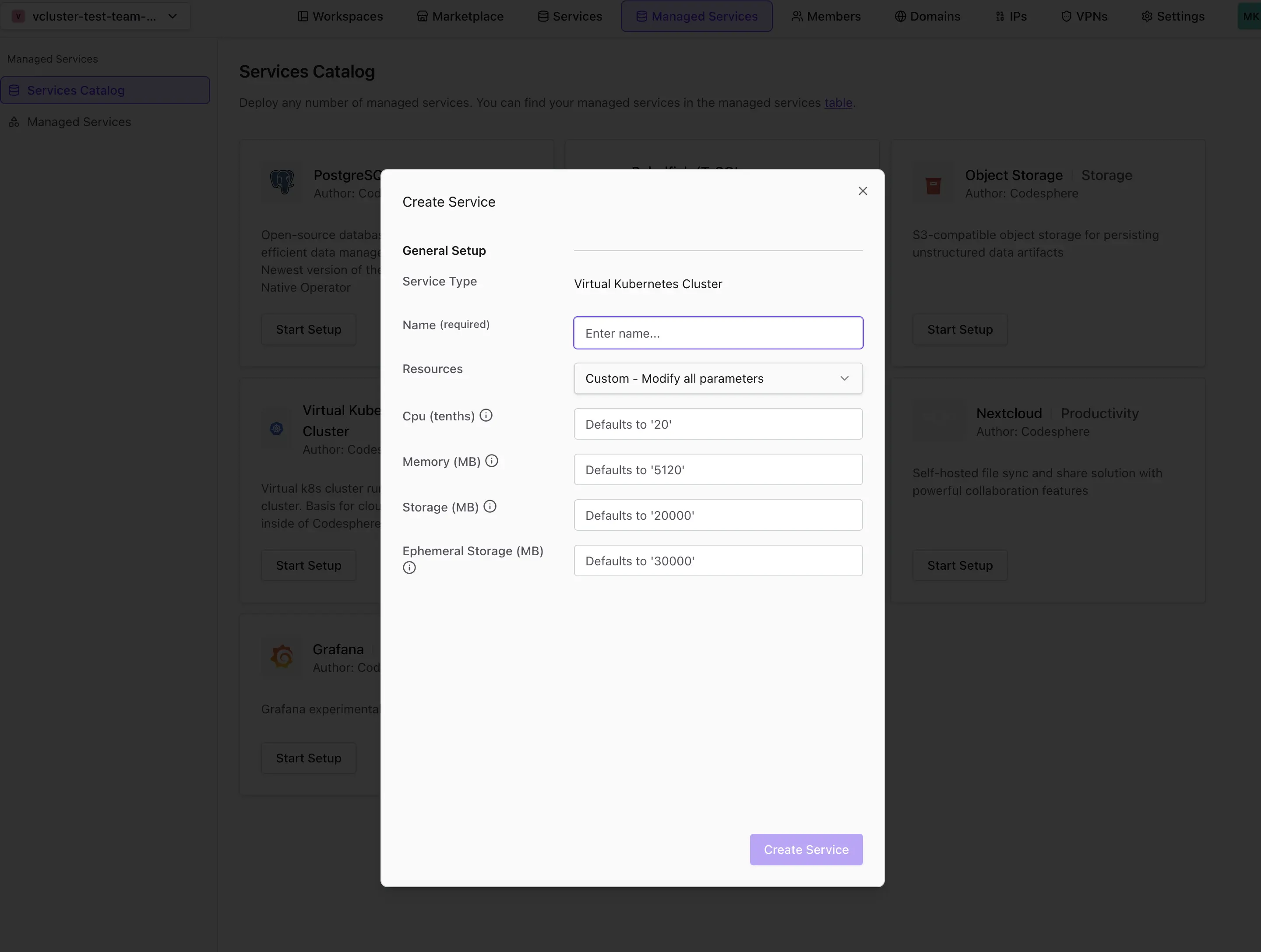

Creating a Virtual Cluster

Currently, each resource group can have only one Virtual Cluster due to resource group namespace constraints. Before creating a new cluster, check whether your resource group already contains one.

To create a Virtual Cluster, follow these steps:

- Go to the Managed Services tab in your Codesphere workspace.

- Select Virtual Kubernetes Cluster from the service catalog. This option lets you set up a new cluster for your team.

- Enter a name and configure the plan according to your needs:

  - vCPU

  - Memory

  - Storage

  - Ephemeral storage

- Confirm and wait for the cluster to become ready. Provisioning may take a few moments.

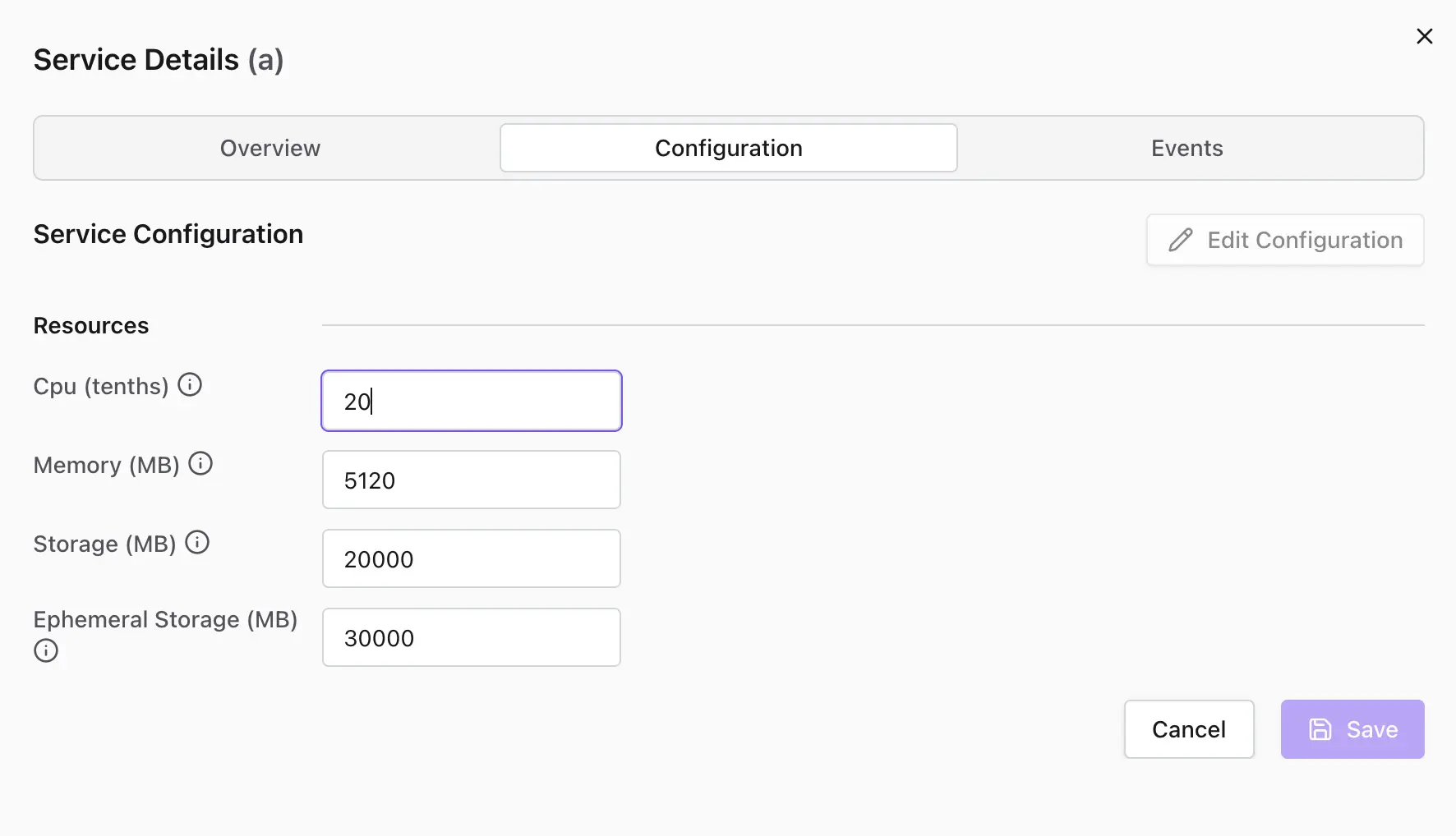

Modifying the Virtual Cluster

- Go to the Managed Services tab.

- Click Settings and open the Configuration tab for your cluster.

- Click Edit Configuration and adjust the plan values as needed.

- Confirm and wait for the changes to take effect. The cluster will update its resources accordingly.

Plans & Resources

Virtual Clusters enforce resource boundaries using Kubernetes ResourceQuota and LimitRange objects. These settings control the maximum memory, CPU, storage, and ephemeral storage available inside the cluster.

- Pods must specify resource requests and limits to ensure fair usage and scheduling

- If a pod does not specify them, the defaults from the LimitRange are applied
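To illustrate explicit requests and limits, the sketch below renders a Pod manifest from a shell function. The function name `render_pod` and the concrete CPU and memory values are illustrative assumptions, not recommendations; the manifest fields themselves are standard Kubernetes. Ephemeral storage is left unset here, so the LimitRange default would apply to it.

```shell
# render_pod NAME IMAGE
# Hypothetical helper: prints a Pod manifest with explicit CPU and memory
# requests and limits. The values are examples only.
render_pod() {
  cat <<EOF
apiVersion: v1
kind: Pod
metadata:
  name: $1
spec:
  containers:
    - name: $1
      image: $2
      resources:
        requests:
          cpu: 100m
          memory: 128Mi
        limits:
          cpu: 250m
          memory: 256Mi
EOF
}

# Usage:
#   render_pod demo nginx:1.27 | kubectl apply -f -
```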

The plan currently limits the accumulated resource limits across all pods, not actual resource usage. If pods cannot be scheduled, try lowering individual pod limits to fit within your plan's quota.

The current LimitRange is:

| Type | Resource | Default Request | Default Limit |
|---|---|---|---|
| Container | memory | 128Mi | 2Gi |
| Container | cpu | 100m | 500m |
| Container | ephemeral-storage | 500Mi | 6Gi |
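Because the plan counts accumulated limits rather than actual usage, the defaults above can use up a plan quickly. The arithmetic below is a sketch that assumes a hypothetical plan with 8Gi (8192Mi) of memory and single-container pods; the function name `pods_that_fit` is made up for this example.

```shell
# pods_that_fit PLAN_MEMORY_MI POD_LIMIT_MI
# Hypothetical helper: integer division showing how many pods with the
# given memory limit fit into the plan's memory quota.
pods_that_fit() {
  echo $(( $1 / $2 ))
}

# Assumed plan: 8Gi = 8192Mi of memory.
pods_that_fit 8192 2048   # default limit of 2Gi per container, prints 4
pods_that_fit 8192 256    # explicit limit of 256Mi per container, prints 32
```

Under these assumptions, only four pods relying on the default memory limit fit into the plan, even if each of them uses far less memory in practice.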

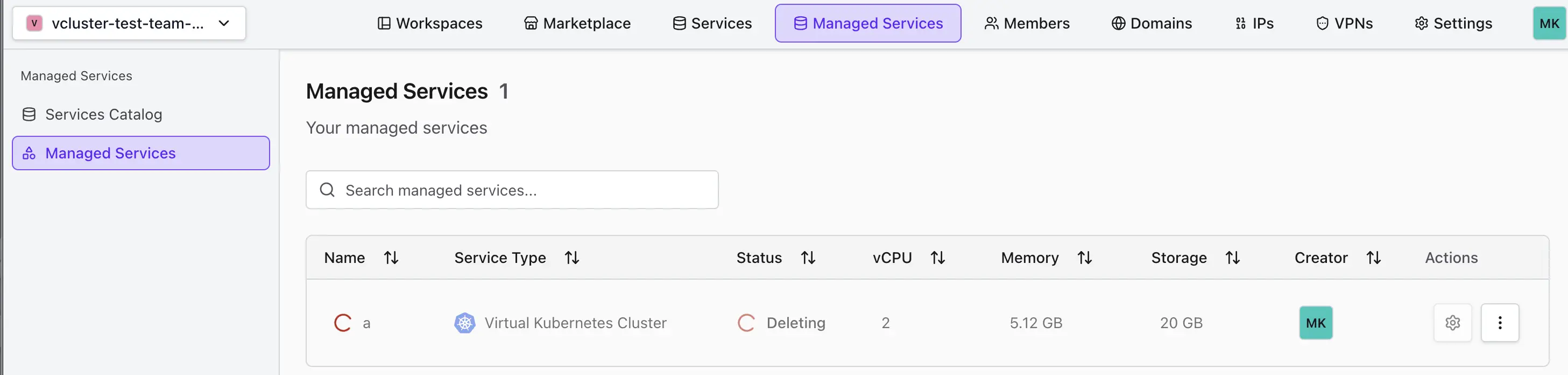

Deleting a Virtual Cluster

If you no longer need a cluster:

- Go to the Managed Services tab.

- Click Actions and select Delete for the cluster you want to remove. This action cannot be undone.

- Confirm and wait for the cluster to be removed.